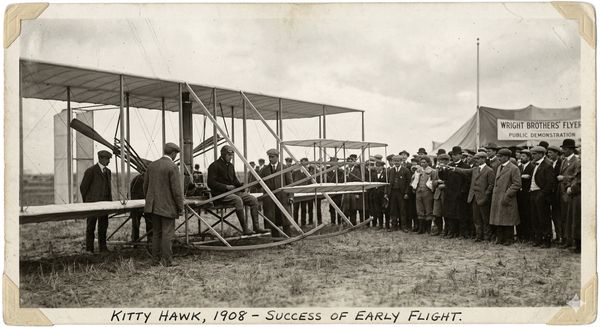

In 1903, the Wright Brothers flew at Kitty Hawk. In 1909, Louis Blériot crossed the English Channel. In 1927, Charles Lindbergh crossed the Atlantic. Each milestone didn’t just prove the technology — it proved the model. A new mode of transportation was real, viable, and increasingly systematizable.

We are at a roughly equivalent moment in software delivery. The Wright Brothers phase of agentic AI — chaotic, improvised, held together by the same people who built the plane and flew it — is ending. The Blériot phase is here. The question is who is doing the systematizing, and whether the frameworks we’ve inherited from the age of land-based travel are fit for the new medium.

I’ve spent years working inside SAFe (the Scaled Agile Framework), first earning my Scaled Agile Practitioner SAFe certification in 2020, then deploying the framework on large enterprise programs at Accenture, including a major transformation at a large equipment manufacturer with global operations. SAFe worked. For what it was designed to do, in the environment it was designed for, it genuinely worked. That’s important context for everything I’m about to say.

More recently, I’ve also deployed several production systems built entirely through agentic AI development. And those two experiences don’t fit in the same conceptual universe. Not because SAFe is poorly designed, but because it was designed for a world where the fundamental constraint was human capacity. That world is over.

What SAFe Was Built to Solve

To understand why agentic AI breaks SAFe, you have to understand what SAFe was actually solving.

Enterprise software delivery at scale has one chronic pathology: coordination failure. Teams build the right things in the wrong order. Dependencies get discovered at the wrong moment. Planning horizons collapse under the weight of organizational inertia. Work piles up at integration points because six teams delivered their pieces and nobody built the connective tissue.

SAFe’s genius was to create a synchronized heartbeat — the Program Increment, typically 8-12 weeks — that forces large organizations to align around shared commitments at regular intervals. PI Planning, the ART (Agile Release Train) structure, the dependency wall where teams physically map their interdependencies — these are elegant solutions to a real problem. They work because they match the natural rhythm of human teams: the time it takes to plan meaningful work, execute it with quality, and inspect the results.

The mental model underneath all of it is coherent and consistent: human teams are the atomic unit of delivery, human capacity is the primary constraint, and coordination is the primary risk.

That mental model is now broken. Not wrong. Broken. The frame can no longer take the load.

What Agentic AI Actually Changes

I’ve spoken in different forums about the cybernetic dimension of this shift — the principle that to govern a more complex system, your control mechanisms must match its complexity. That complexity-impedance idea nagged at me when I first started working in agentic development. Something felt viscerally wrong about applying agile discipline to this new operating reality, but I couldn’t put my finger on it. Then it landed: agentic AI doesn’t improve software delivery. It changes its physics.

In a SAFe program, a team of eight engineers might deliver fifteen to twenty story points per sprint. Planning, coding, reviewing, integrating — the human is the bottleneck at every stage, which is why the entire framework is organized around optimizing human coordination.

In an agentic system, a single developer directing AI agents can generate, test, and iterate on what would previously have been weeks of work in hours. This isn’t a 2x or 5x improvement. It’s a change in the order of magnitude — and more importantly, a change in the nature of the constraint. Recent analysis of public code repositories found that 4% of all GitHub commits are now written by AI — a trend accelerating with each model generation.

The bottleneck is no longer creation. The bottleneck is now human review chokepoints — the moments where a person must inspect, validate, and redirect before the next wave of generation runs. And this exposes the deepest structural flaw in applying SAFe to agentic work: SAFe was built to prevent under-delivery. Agentic AI’s primary risk is the precise inverse — over-delivery of plausible-but-wrong output.
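To make the shift in constraint concrete, here is a minimal back-of-the-envelope sketch in Python. The rates are invented for illustration (they are not measurements from any program I have run), and `review_backlog` is a hypothetical helper. It only shows the arithmetic: once generation outpaces review, unreviewed work accumulates linearly.

```python
def review_backlog(generated_per_hour: float, reviewed_per_hour: float, hours: int) -> list[float]:
    """Return the unreviewed backlog at the end of each hour.

    All rates are illustrative assumptions, expressed in "change sets per hour".
    """
    backlog = 0.0
    history = []
    for _ in range(hours):
        # Backlog grows by what was generated and shrinks by what was reviewed;
        # it can never drop below zero.
        backlog = max(0.0, backlog + generated_per_hour - reviewed_per_hour)
        history.append(backlog)
    return history


# Human-paced creation: reviewers keep up, so nothing piles up.
human_paced = review_backlog(generated_per_hour=2, reviewed_per_hour=3, hours=8)

# Agent-paced creation with the same reviewers: the queue grows every hour.
agent_paced = review_backlog(generated_per_hour=30, reviewed_per_hour=3, hours=8)

print(human_paced[-1])  # 0.0
print(agent_paced[-1])  # 216.0
```

The point of the toy model is not the specific numbers. It is that adding generation capacity does nothing for the second case; only review bandwidth, or a different kind of review, changes the slope.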

The Four Assumptions That Break

Each of these deserves its own detailed treatment — and I’ll explore them in subsequent pieces. But here is the shape of each failure:

1. The Program Increment as planning horizon. PI Planning assumes reality is stable enough over 8-12 weeks that meaningful commitments can be made upfront. In agentic development, the codebase can structurally transform within days. You are not planning a sprint — you are steering a system that is continuously rewriting itself. A fixed planning horizon isn’t just inefficient; it’s incoherent.

2. Velocity and story points as units of work. Story points measure human effort. When an agent produces in one hour what a senior engineer would produce in a week, the unit of measure has lost its meaning. More dangerously, it creates a false sense of progress — velocity metrics spike while the actual governance question (is this output correct and safe to deploy?) goes unmeasured.

3. Human team capacity as the primary bottleneck. This is the deepest assumption in SAFe, and it’s simply no longer true in agentic contexts. The bottleneck has migrated to human judgment — specifically, the bandwidth available for meaningful review of machine-generated output. SAFe has no ceremony designed around this. No role dedicated to it. No artifact tracking it.

4. Linear dependency management. The PI Planning dependency wall is one of SAFe’s most powerful tools — teams physically mapping what they need from whom and when. In agentic systems, dependencies don’t live between teams. They live between context windows, between prompt contracts, between the implicit agreements that govern how one agent’s output becomes another agent’s input. The dependency wall has no column for that.
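As one illustration of the fourth point, a "prompt contract" can be treated as an explicit, checkable agreement about what one agent hands to the next. The sketch below is an assumption about what such a check might look like, not a description of any existing tool: `PromptContract`, `violations`, the agent names, and the field names are all hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class PromptContract:
    """Hypothetical contract: the fields a downstream agent expects to receive."""
    producer: str
    consumer: str
    required_fields: dict[str, type]


def violations(contract: PromptContract, raw_output: str) -> list[str]:
    """List the ways raw_output breaks the contract (an empty list means it conforms)."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"output is not valid JSON: {exc.msg}"]
    if not isinstance(payload, dict):
        return ["output is not a JSON object"]

    problems = []
    for name, expected in contract.required_fields.items():
        if name not in payload:
            problems.append(f"missing field '{name}'")
        elif not isinstance(payload[name], expected):
            problems.append(f"field '{name}' should be {expected.__name__}")
    return problems


# Illustrative contract between two made-up agents.
contract = PromptContract(
    producer="planner_agent",
    consumer="coder_agent",
    required_fields={"task_id": str, "files": list, "acceptance_tests": list},
)

good = '{"task_id": "T-1", "files": ["api.py"], "acceptance_tests": ["test_api.py"]}'
bad = '{"task_id": 7, "files": ["api.py"]}'

print(violations(contract, good))  # []
print(violations(contract, bad))   # ["field 'task_id' should be str", "missing field 'acceptance_tests'"]
```

A check like this covers only the structural part of the dependency. The implicit agreements (what the downstream agent assumes about intent or scope) still need a human or an eval to inspect them.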

The Contrarian Arguments — And Why They Don’t Hold

“SAFe is configurable — just tailor it.”

This is the first line of defense from SAFe practitioners, and it’s not wrong in theory. SAFe explicitly encourages tailoring. The framework even has emerging AI guidance on its website. But this argument mistakes configurability for adaptability. The issue isn’t that SAFe lacks the right checkbox — it’s that the mental model underneath SAFe is so thoroughly organized around human-capacity-as-bottleneck that no configuration escapes it. You can tailor the ceremonies. You cannot tailor away the foundational assumption. The frame can’t take the load — and adding new bolts doesn’t fix the frame.

“Agentic AI isn’t mature enough to govern differently.”

My five production deployments say otherwise. So does the trajectory of every major AI lab’s output over the past eighteen months. The responsible position isn’t to wait for maturity — it’s to build governance that can evolve as fast as the capability. Waiting for agentic AI to “stabilize” before rethinking governance is like waiting for flight to become safe before inventing air traffic control. The sequence is backwards.

“SAFe has built-in quality practices — just apply them more rigorously.”

The difference between theory and practice is the punchline of too many jokes. Yes, SAFe’s built-in quality pillar exists. But traditional quality assurance validates output against a specification written by humans, at human speed, against human-comprehensible criteria. The “plausible-but-wrong” problem in agentic AI is categorically different: the output volume exceeds human review bandwidth, the errors are non-obvious, and the specification itself may have been generated by the same system being evaluated. You need new mechanisms, not more rigorous application of old ones.

“This is a culture and talent problem, not a framework problem.”

True, to a degree. Organizations that lack strong engineering culture will fail at agentic AI regardless of their framework. But cybernetic law doesn’t care about culture. If your control mechanism operates at human cadence and your system generates output at machine cadence, you have a structural mismatch. You cannot hire your way out of a mismatch between the speed of a system and the speed of its governor.

What We’re Actually Building Instead

I’m not claiming to have invented the new framework. I’m claiming that the new framework is being invented right now, in production systems, by teams willing to fly the plane while figuring out the instruments. As incoming CTO at Code Éxitos, I have established approaches that operate at two levels: human-in-the-loop (interrupting agents before they chase wrong paths, redirecting mid-flight, catching compounding errors before they propagate) and human-on-the-loop (meta-level analysis of prompt patterns, eval-based adjustments, governance that operates on the system rather than inside it).
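The two levels can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions, not the implementation used in any production system: the step strings, the `reviewer` rules, and the function names are hypothetical. The in-the-loop part is the checkpoint inside the run; the on-the-loop part is the aggregate signal computed across decisions.

```python
from collections import Counter
from typing import Callable

# A reviewer returns one of: "approve", "redirect", "halt".
Reviewer = Callable[[str], str]


def run_with_checkpoints(steps: list[str], review: Reviewer) -> tuple[list[str], Counter]:
    """Human-in-the-loop: every proposed step passes a review checkpoint before it is accepted."""
    accepted: list[str] = []
    decisions: Counter = Counter()
    for step in steps:
        decision = review(step)
        decisions[decision] += 1
        if decision == "approve":
            accepted.append(step)
        elif decision == "halt":
            break  # stop the run before errors compound
        # "redirect": the step is dropped and the run continues
    return accepted, decisions


def intervention_rate(decisions: Counter) -> float:
    """Human-on-the-loop: a system-level signal, the share of steps that needed intervention."""
    total = sum(decisions.values())
    return (decisions["redirect"] + decisions["halt"]) / total if total else 0.0


def reviewer(step: str) -> str:
    # Stand-in for a human decision; the rules here are purely illustrative.
    if "drop table" in step:
        return "halt"
    if "rewrite auth" in step:
        return "redirect"
    return "approve"


steps = ["add endpoint", "rewrite auth module", "write tests", "drop table users", "deploy"]
accepted, decisions = run_with_checkpoints(steps, reviewer)

print(accepted)                      # ['add endpoint', 'write tests']
print(intervention_rate(decisions))  # 0.5
```

In this toy run, a rising intervention rate is the kind of signal that would prompt an on-the-loop change (revising prompts or evals) rather than more in-the-loop effort on individual steps.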

Neither of these maps cleanly to a SAFe ceremony. Both are responses to the actual failure modes of agentic development — not the theoretical ones.

The aviation analogy I keep returning to with clients is this: the Wright Brothers had to be aviators, mechanics, and inventors simultaneously. Blériot and Curtiss took what the Wrights proved and began to systematize it. Lindbergh and Earhart proved the envelope. And eventually — through accumulated learning from what actually went wrong — flight became the safest mode of transportation in history.

We are in the Blériot phase. The Wright Brothers moment has passed — agentic AI development is real, it works, and it is already in production at organizations that understand what they’re doing. What comes next is systematization: building the instruments, the protocols, the governance structures that turn a pioneering practice into a reproducible one.

SAFe is not the answer to that challenge. It is, in its current form, a very good solution to a problem that is no longer the primary one. The organizations that recognize this early — that understand the bottleneck has moved from creation to governance, from under-delivery risk to plausible-wrongness risk — are the ones that will be competing at airplane speeds while their peers are still optimizing their rail networks.

This is the first in a series examining the specific SAFe assumptions broken by agentic AI. Future pieces will go deep on PI Planning horizons, the story point fallacy, and what human-on-the-loop governance actually looks like in practice. I also recommend reading The Silent Risk in Your Agentic AI Stack for deeper context.